Prompt Engineering

Prompt engineering is rapidly becoming one of the most important skills in the age of large language models (LLMs). Whether you’re a marketer, developer, content creator, or aspiring AI-prompt engineer, mastering how to craft effective prompts gives you a competitive edge. In this blog we’ll explore everything from LLM prompting to advanced techniques, career implications, applications for content creation, best practices, and current trends.

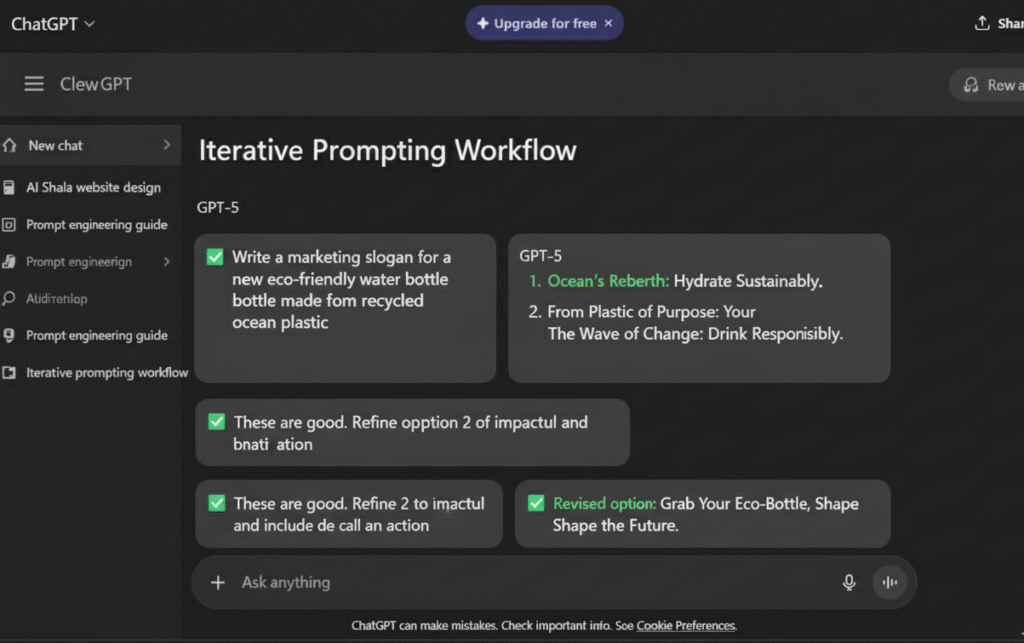

LLM Prompting (Large Language Model Prompting)

“LLM prompting” refers to the process of giving a large language model (like GPT‑4, Gemini, etc.) an instruction or input (“prompt”) so that it produces a useful output.

Key points:

- The prompt is more than just a question: it defines the task, context, format, and desired output.

- Because LLMs are trained on massive text corpora, they respond best when the prompt clearly defines role, context, and constraints.

- As models evolve (longer context windows, multimodal inputs), prompt engineering evolves too: you can include longer documents, images, videos, and more detailed instructions.

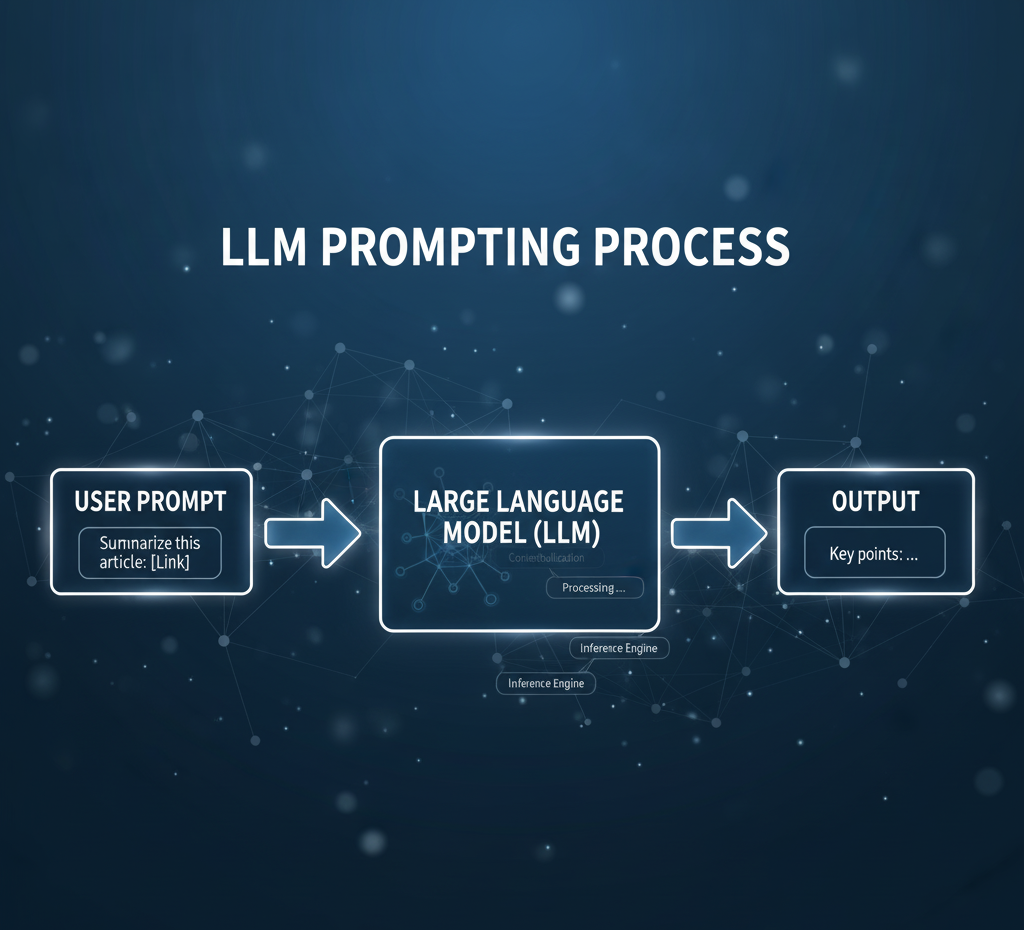

ChatGPT Prompting (or Prompting GPT-5/Gemini)

When we refer to “ChatGPT prompting” (or prompting other chat models such as GPT-5 or Gemini), we emphasise interaction with conversational models, where you play the role of “user” and the model acts as “assistant.”

Important aspects:

- System prompt / assistant role: In tools like the ChatGPT interface you can set system instructions (e.g. “You are a friendly sports-analyst specialising in football tactics”).

- Turn-based prompting: You can reference previous conversation turns, refine prompts, ask follow-up questions, correct the model, etc.

- Because chat-style models are interactive, good prompting involves not just the first prompt but how you iterate, steer the model’s responses and refine them.

For example: “You are an experienced football tactical journalist. Please analyse the recent Real Madrid 4-3-3 formation vs opponent X in 500 words, using headings and bullet points.” Then you may ask follow-up questions: “Now summarise key weaknesses and propose two alternative formations.”
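The system prompt and follow-up turns described above can be sketched as a list of role-tagged messages. This is a minimal sketch, assuming the `system` / `user` / `assistant` role names that many chat-style APIs use; the helper names `start_conversation` and `add_follow_up` are hypothetical, and no model is actually called.

```python
# Hypothetical helpers: they only build the message history; no model is called.
Message = dict[str, str]


def start_conversation(system_prompt: str, user_prompt: str) -> list[Message]:
    """Open a chat with a system instruction followed by the first user turn."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


def add_follow_up(messages: list[Message], assistant_reply: str, follow_up: str) -> list[Message]:
    """Return a new history that records the model's reply and the next user turn."""
    return messages + [
        {"role": "assistant", "content": assistant_reply},
        {"role": "user", "content": follow_up},
    ]


history = start_conversation(
    "You are an experienced football tactical journalist.",
    "Please analyse the recent Real Madrid 4-3-3 formation vs opponent X "
    "in 500 words, using headings and bullet points.",
)
history = add_follow_up(
    history,
    "<the model's first analysis would go here>",  # placeholder, not real output
    "Now summarise key weaknesses and propose two alternative formations.",
)

for message in history:
    print(f"{message['role']}: {message['content'][:60]}")
```

Because the whole history is sent on every turn, the follow-up can refer to “the answer above” without restating it. Exact field names vary by provider, so check the API you are using.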

AI Prompt Engineer

As the field grows, “AI Prompt Engineer” is emerging as a career path. Below is what you should know if you’re considering it.

Do I need to be a programmer to be an AI Prompt Engineer?

Not necessarily, but having programming or data skills is a strong advantage.

- A prompt engineer needs strong language skills, logic, domain knowledge, task-understanding and the ability to refine prompts by testing.

- If you are integrating prompts into apps, pipelines or working with APIs (e.g. OpenAI, Azure OpenAI, or embeddings + retrieval systems) then programming (Python, R, even Excel for simple cases) helps.

- In many organisations, the role might also encompass defining prompt templates, maintaining prompt libraries, tweaking system prompts and fine-tuning workflows, all of which may require some technical fluency.

- For a marketing/content-creation path you may lean more on domain + creative skills; for an enterprise/engineering path you’ll lean more on programming, data and system integration.

What skills are needed?

- Understanding of how LLMs work (the fundamentals)

- Prompt-design: defining role, task, context, format (see later section)

- Ability to test, evaluate, iterate, measure outputs (A/B testing prompts)

- Domain expertise (e.g., marketing, HR, CRM, content, legal) plus ethical awareness

- Awareness of tool-ecosystem: prompt libraries, templates, retrieval systems, embeddings, vector DBs

- (For advanced) Some programming/API knowledge for integrating prompts into workflows or building Retrieval-Augmented Generation (RAG) systems.

Why this role is relevant now

- As more enterprises adopt LLMs, they need people who can bridge domain experts and model usage.

- Prompt engineering helps reduce reliance on costly fine-tuning, and enables “prompt-first” workflows that leverage existing models.

- With the rapid evolution of model capabilities (e.g., larger context windows, multimodal inputs) prompt engineers add value in designing scalable prompt systems.

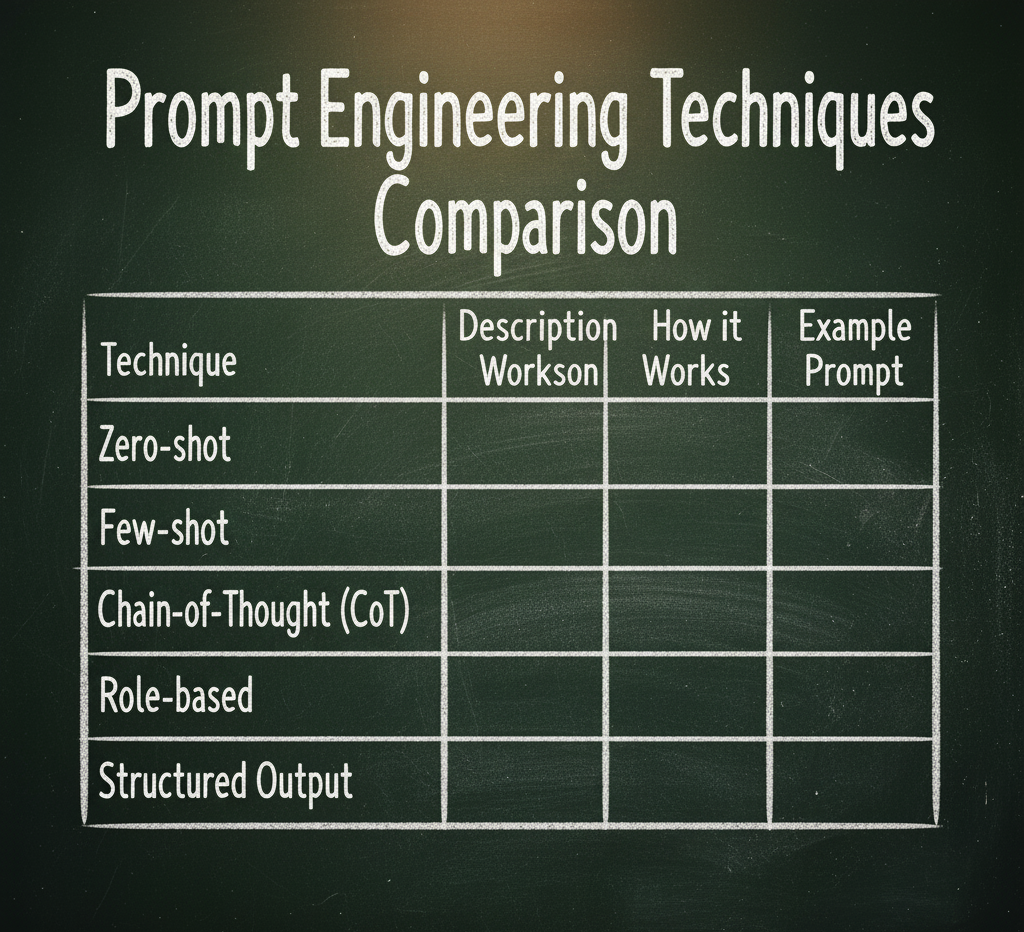

Prompt Engineering Techniques

Prompt engineering techniques are methods and patterns to get better output from LLMs.

Some of the basic and widely-used techniques:

- Zero-Shot Prompting: you ask the model with no examples, only an instruction (e.g., “Summarise the following text”).

- Few-Shot Prompting: you include a few example input/output pairs in the prompt so the model sees how you want it done.

- Chain-of-Thought Prompting (CoT): you instruct the model to “think step-by-step” or include reasoning steps (covered in more detail in its own section). (DataCamp)

- Role/Instruction Prompts: set the model’s role (“You are an expert marketer”) + specify task, context and format.

- Structured Output Prompts: you ask for output in JSON, YAML or tables (covered later).

- Prompt Templates & Libraries: using predefined templates for common tasks (e.g., marketing copy, SEO meta description, email responses).

- Self-Correction / Self-Verification: asking the model to critique its own output, refine it, or verify facts.

These techniques form the foundation of smart prompt engineering.

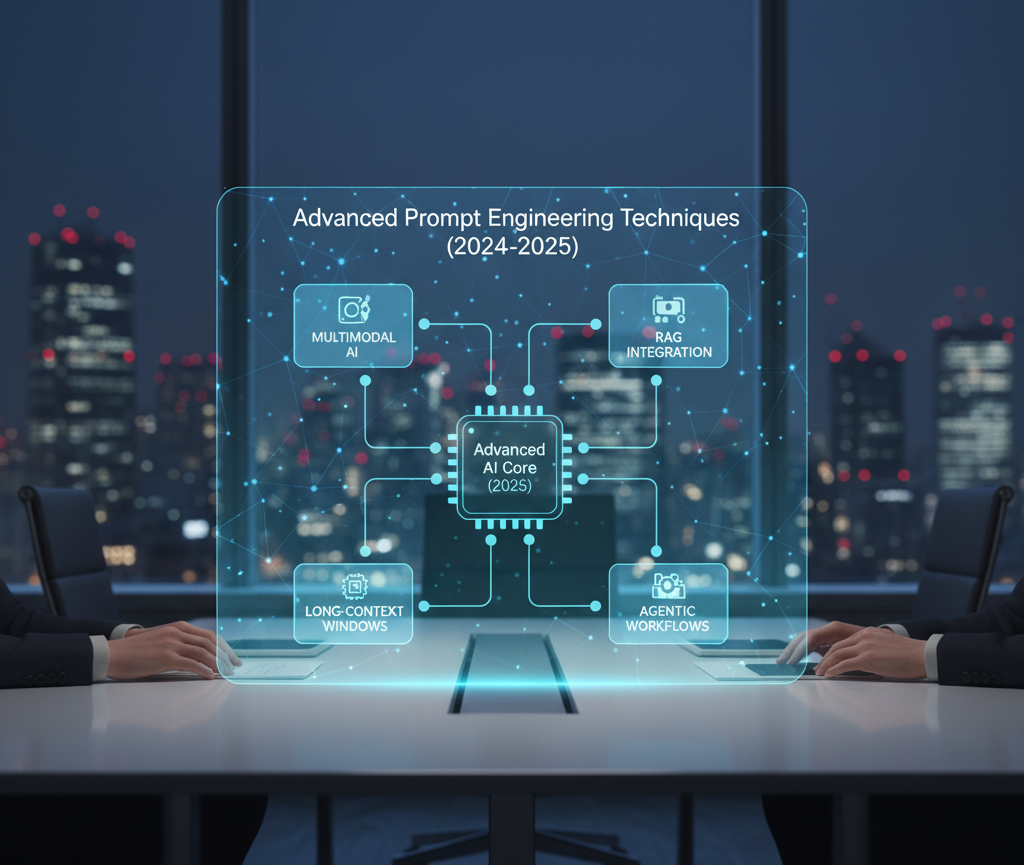

Advanced Prompt Engineering Techniques 2025

As LLMs become more powerful and models allow longer context windows (from thousands up to hundreds of thousands of tokens, and in some cases more) and multimodal inputs (text, images and, in some models, audio or video), prompt engineering must evolve. Here are advanced techniques for 2025:

Growing context windows

Newer models permit much longer prompt/context lengths. That means you can feed the LLM entire documents, long chat histories, or several sources at once, and then pose more sophisticated tasks. This enables tailoring prompts with rich context, placing large amounts of retrieved external knowledge directly in the prompt, and chaining multiple operations in one prompt.

Multimodality

Prompt engineering is no longer only about text. With models that accept image, video or audio inputs (or mixed inputs) you can craft prompts like: “Here is a football match screenshot. Analyse the positioning of Real Madrid’s left winger. Provide three tactical insights.” The prompt must now reference the visual context, define how the model should interpret it, and structure the output accordingly.

Prompt Templates & Libraries

As organisations scale prompt usage, they often develop prompt libraries and templates (for example using tools like AIPRM) so prompt engineers don’t recreate from scratch each time. Templates standardise roles, tasks, context and formats, enabling consistency, reuse and faster deployment.

Self-Correction / Self-Verification

Rather than just one pass, you can ask the model to critique its answer, identify errors, or “think again”. For example: “Now review the answer above, point out any potential mistakes and refine it.” This prompts a chain-of-verification (CoVe) or self-audit sequence, improving reliability. This is increasingly used as models grow more complex and stakes are higher.

Retrieval-Augmented Generation (RAG) integration

Advanced prompt engineering is often combined with retrieval systems (see the dedicated section). Many organisations now integrate vector databases, knowledge bases and augmented prompts to ground the model in factual data. The prompt then includes the retrieved context, a request for citations, and instructions to answer only from that context rather than hallucinate.

Hybrid & Multi-step workflows

Rather than a single prompt → answer, you may orchestrate multi-step prompt chains: first retrieval, then summarisation, then reasoning, then output formatting. You may orchestrate agent-style flows: ask the model to plan, then execute, then critique. These workflows require advanced prompt design, checkpointing, iterative prompting and combining results.
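A multi-step chain like this can be sketched as a function that feeds each step's output into the next prompt. This is a minimal sketch, not a definitive implementation: `LLM` is assumed to be any function that maps a prompt string to a text reply, and `run_chain` and `placeholder_llm` are hypothetical names used only for illustration.

```python
from typing import Callable

# Assumption: `LLM` is any function that takes a prompt and returns text.
LLM = Callable[[str], str]


def run_chain(llm: LLM, question: str, documents: list[str]) -> dict[str, str]:
    """Run summarise -> reason -> format -> review, feeding each output forward."""
    joined = "\n\n".join(documents)
    summary = llm(f"Summarise the key facts in these documents:\n\n{joined}")
    reasoning = llm(
        f"Using only this summary:\n{summary}\n\nThink step by step about: {question}"
    )
    answer = llm(
        "Turn this reasoning into a final answer with headings and bullet points:\n"
        f"{reasoning}"
    )
    reviewed = llm(
        "Review the answer below, point out any potential mistakes and refine it:\n"
        f"{answer}"
    )
    # Keeping every intermediate result acts as a simple checkpoint for debugging.
    return {
        "summary": summary,
        "reasoning": reasoning,
        "answer": answer,
        "reviewed": reviewed,
    }


def placeholder_llm(prompt: str) -> str:
    """Stand-in model so the sketch runs offline; swap in a real client call."""
    return f"<output for: {prompt.splitlines()[0]}>"


steps = run_chain(
    placeholder_llm,
    "Which formation suits the squad?",
    ["Document A text", "Document B text"],
)
print(list(steps))
```

Returning all four intermediate results, rather than only the last one, makes it easier to see which step went wrong when the final output is poor.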

These advanced techniques enable more robust, accurate and scalable prompt engineering suitable for enterprise-grade usage.

Chain-of-Thought Prompting

What is Chain-of-Thought (CoT) Prompting and how does it improve LLM reasoning?

Chain-of-Thought (CoT) prompting is a technique where you encourage the model to generate intermediate reasoning steps before arriving at the final answer. Instead of asking directly “What is the answer?”, you ask the model to “think through the steps, then provide the answer.” (IBM)

Why it matters:

Many LLMs perform better on complex tasks (arithmetic, logic, commonsense) when they are prompted to break down their reasoning. For example, a sufficiently large model given a few CoT exemplars has been reported to outperform fine-tuned models on the GSM8K maths benchmark. (arXiv)

CoT gives transparency: you see how the model reasoned, can audit the steps, diagnose errors.

It reduces “jumpy” answers: instead of jumping straight to the final answer, the model works step-by-step.

How to apply:

Zero-shot CoT: simply append an instruction like “Let’s think step by step.” to your prompt. (arXiv)

Few-shot CoT: include examples where each input has an explanation chain, then output. Then ask the target question. (prompthub.us)

Variants: Tabular CoT, Contrastive CoT (showing correct vs incorrect chains), Plan-and-Solve CoT (plan subtasks then solve). (arXiv)

Limitations:

Requires a sufficiently large model (if the model is too small, the reasoning chain may look plausible but be wrong). (prompthub.us)

Chains may be coherent but not faithful to how the model derived the answer – so transparency is limited. (arXiv)

In summary, if you are tackling tasks where reasoning, logic, multiple steps matter (e.g. business case analysis, forecasting, decision support) then using CoT prompting is one of the most impactful techniques in your toolkit.
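Zero-shot CoT can be sketched as a small prompt builder plus a parser for the final line. This is a minimal sketch under stated assumptions: the `Final answer:` marker is a convention invented for this example (not part of any model's API), and `zero_shot_cot` and `extract_final_answer` are hypothetical helper names.

```python
import re
from typing import Optional


def zero_shot_cot(question: str) -> str:
    """Append the step-by-step cue and ask for a clearly marked final line."""
    return (
        f"{question}\n\n"
        "Let's think step by step. "
        "After the reasoning, end with one line of the form 'Final answer: <value>'."
    )


def extract_final_answer(model_output: str) -> Optional[str]:
    """Return the text after 'Final answer:', or None if the marker is missing."""
    match = re.search(r"Final answer:\s*(.+)", model_output)
    return match.group(1).strip() if match else None


prompt = zero_shot_cot("A kit costs 40 and boots cost 60. What do 2 kits and 1 pair of boots cost?")
print(prompt)

# A hand-written stand-in for a model reply, used only to exercise the parser.
sample_reply = "Two kits cost 2 x 40 = 80.\nBoots cost 60.\n80 + 60 = 140.\nFinal answer: 140"
print(extract_final_answer(sample_reply))
```

Separating the reasoning from a marked final line lets you audit the steps while still handing a clean value to downstream code.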

Chain-of-Verification Reduces Hallucination in Large Language Models (Dhuliawala et al., 2023)

Prompt Engineering for Content Creation

Prompt engineering is especially valuable for content creators, marketers, SEO specialists, social media managers, bloggers (like you) and anyone who needs to produce high-quality written or multimedia content. Here’s how to apply it:

- Define the role, task, context, format (see next section) in your prompt: e.g. “You are a senior SEO copywriter. Write a 1,200-word blog post on prompt engineering targeted at digital marketers. Use headings, bullet points, include relevant keywords (‘prompt engineering’, ‘LLM prompting’, ‘RAG’, etc.).”

- Use few-shot examples: include one or two mini-examples of tone, structure or format you like. This gives the LLM direction.

- Use structured output: you may ask for sections, meta description, alt text for images, list of keywords, internal link suggestions.

- Incorporate SEO: you can ask the model to generate meta title, meta description, H1, H2s, slug, keywords, and internal/external link suggestions. Example: “Also generate a meta description (max 155 characters) and suggest 5 long-tail keywords.”

- Use templates: You can build content prompt templates that you reuse. Example:

Role: Expert sportswear brand marketer

Task: Write a blog of ~900 words for Indian audience about [TOPIC]

Context: brand numberten7 (football-centric activewear)

Format: include intro, three main headings, conclusion, call-to-action.

- Use self-verification: After the content generation ask the model: “Now review the blog you generated, correct any factual inaccuracies and optimise for readability (Flesch-Kincaid grade 8).”

- Leverage multimodality (if available): If you include images or data charts (e.g., about prompt-engineering trends), ask the model to generate alt text or suggest image captions and placement.

By applying prompt engineering for content creation you can significantly increase the speed, quality and relevance of your output.

How to Write Effective Prompts for AI

To write effective prompts you should treat the prompt like a specification: it has a role, a task, context and format. Here is a checklist:

Key components of an effective prompt (Role, Task, Context, Format)

- Role: Define who the model is in the prompt. e.g. “You are a senior data analyst”, “You are an AI prompt engineer”, “You are a SEO specialist for blogging”.

- Task: What you want the model to do. e.g. “Write an executive summary”, “Generate JSON output”, “List five use cases”.

- Context: Background information the model needs to know. e.g. “The audience is marketing managers in India”, “This brand numberten7 sells football boots and activewear”, “The task is to help reduce hallucinations by adding retrieval context.”

- Format: How the output should be structured. e.g. “Provide headings and bullet points”, “Produce YAML output”, “Length: approx 800 words”, “Include keywords under 2% density”.

When you make these elements explicit you guide the model and reduce ambiguity.
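Treating the prompt as a specification can be sketched as a small data class with one field per component. This is an illustrative sketch only: `PromptSpec` and its `render` method are hypothetical names, and the `Role:` / `Task:` / `Context:` / `Format:` labels simply mirror the checklist above.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """One field per checklist item; all four are required."""
    role: str
    task: str
    context: str
    output_format: str

    def render(self) -> str:
        """Lay the four components out as labelled lines in a fixed order."""
        return "\n".join(
            [
                f"Role: {self.role}",
                f"Task: {self.task}",
                f"Context: {self.context}",
                f"Format: {self.output_format}",
            ]
        )


spec = PromptSpec(
    role="You are a senior data analyst",
    task="Write an executive summary",
    context="The audience is marketing managers in India",
    output_format="Provide headings and bullet points; length approx 800 words",
)
print(spec.render())
```

Because every field is required, a prompt that is missing its context or format fails when you build it rather than silently producing a vaguer request.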

Additional tips

- Use clear and specific instructions rather than vague commands.

- If you want structured output (table, JSON, bullet list) say so.

- If you want constraints (tone: friendly, technical, list length) include them.

- If you plan to iterate, you can ask the model to suggest improvements or ask for clarifications.

- Test and iterate your prompt: tweak wording, adjust context, examine model responses.

- For complex tasks, break them into subtasks (plan → information retrieval → reasoning → output).

How to write prompts for structured data output (JSON/YAML) from LLMs?

When you want output in a machine-readable format, you can ask for JSON or YAML. Example:

Role: Data engineer

Task: Produce a JSON object summarising the blog post on prompt engineering

Context: Audience = digital marketers

Format: JSON only, with fields: title, wordCount, keywords[], metaDescription, headings[]

Tips:

- Use “JSON only, no explanation” or “YAML only” if you want strictly machine-readable output.

- Define field names and data types so the model knows what to output.

- Provide an example if needed (few-shot).

- Validate the JSON, ideally in code (parse it and check it against your schema); asking the model “Validate that this JSON conforms to the schema” is a weaker fallback.

- Use indentation and consistent quoting in prompts to minimise parsing errors.

- If you have multiple items, instruct it: “Return an array of objects”.

Example output:

{

"title": "Prompt Engineering: Mastering the Art of LLM Interaction",

"wordCount": 1200,

"keywords": ["prompt engineering", "LLM prompting", "RAG", "chain of thought prompting"],

"metaDescription": "Discover how to craft effective prompts for LLMs, advanced techniques like RAG and Chain-of-Thought, and build your career as a prompt engineer.",

"headings": ["Introduction", "LLM Prompting", "ChatGPT Prompting", "Prompt Engineering Techniques", "Conclusion"]

}

By specifying structure, you make it much easier to integrate the LLM output into apps, dashboards or pipelines.
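Validating the output in code can be sketched with Python's standard `json` module. This is a minimal sketch: the field names come from the example prompt above, while `REQUIRED_FIELDS` and `parse_summary` are hypothetical names, and the check covers only top-level types rather than a full schema.

```python
import json

# Field names taken from the example prompt; the expected types are an assumption.
REQUIRED_FIELDS = {
    "title": str,
    "wordCount": int,
    "keywords": list,
    "metaDescription": str,
    "headings": list,
}


def parse_summary(raw: str) -> dict:
    """Parse model output and check that each required field has the expected type."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"field {field} should be {expected_type.__name__}")
    return data


raw_output = (
    '{"title": "Prompt Engineering", "wordCount": 1200, '
    '"keywords": ["prompt engineering", "RAG"], '
    '"metaDescription": "How to craft effective prompts.", '
    '"headings": ["Introduction", "Conclusion"]}'
)
summary = parse_summary(raw_output)
print(summary["title"], summary["wordCount"])
```

Note that strict JSON requires straight double quotes; output that uses typographic quotes (“ ”) or adds commentary around the object will fail at `json.loads`, which is a useful signal to retry or tighten the prompt.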

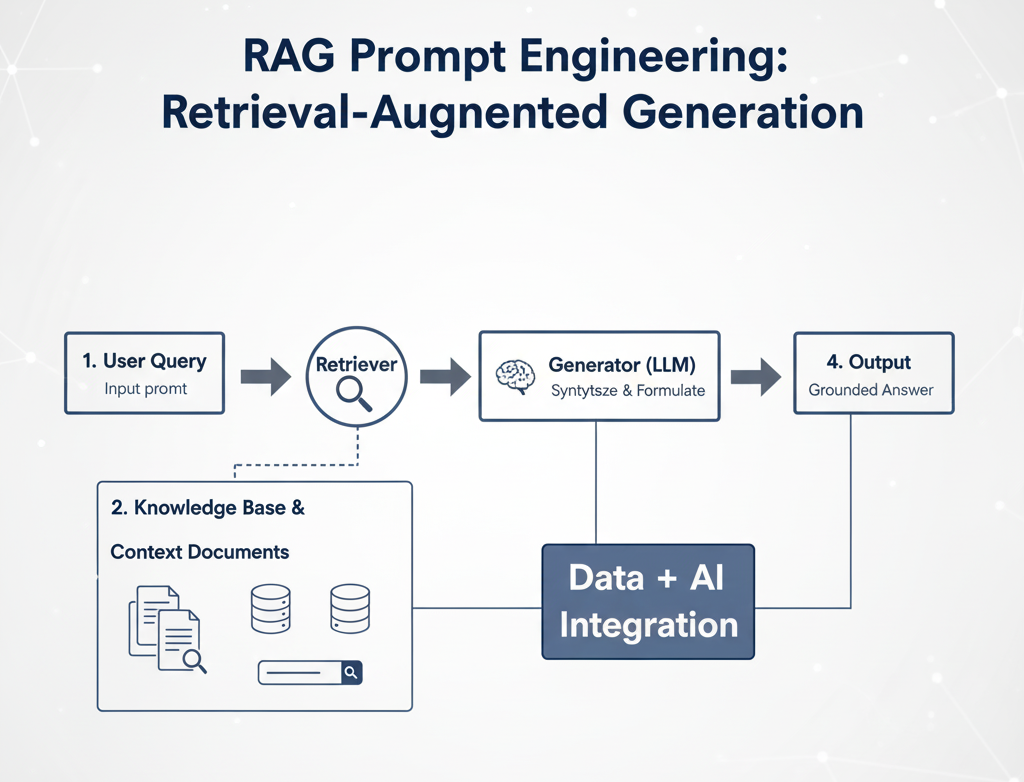

RAG Prompt Engineering (Retrieval-Augmented Generation, a major current trend)

How can I use the RAG (Retrieval-Augmented Generation) framework to prevent AI hallucinations?

RAG stands for Retrieval-Augmented Generation. It is a framework where you combine an information retrieval system (e.g., vector store, knowledge base, documents) with an LLM so that the model is grounded in factual data rather than relying solely on its pre-training. (Amazon Web Services, Inc.)

How it works (simplified):

- The user submits a query/prompt.

- The retrieval component retrieves relevant documents (from internal KB, uploaded files, vector DB).

- The prompt is augmented with the retrieved context (e.g., “Here are three documents. Use them to answer the question.”) (k2view.com)

- The LLM generates the answer, using the retrieved info plus its own knowledge.

- Optionally the system verifies or cites sources.

Why it helps prevent hallucinations:

- The LLM is instructed (via prompt engineering) to rely on the included documents rather than inventing facts, which reduces hallucinations even though it cannot eliminate them entirely.

- Especially useful for domain-specific applications where knowledge is proprietary or newly updated. (promptingguide.ai)

- You can design the prompt to instruct: “Only answer based on the following documents. If uncertain, say ‘I don’t know’.”

- You can include retrieval metadata (source names, publication dates) and ask the model to cite them.

Prompt design tips for RAG:

- After retrieval, place the context near the start of the prompt; be mindful of token limits (even when using long context windows).

- Use formatting so the LLM knows which text is retrieved context and which is the new instruction.

- Define the task: “Use the retrieved documents to answer, do not hallucinate.”

- If the model supports it, instruct it to add citations like [Doc1, p.2].

- Use self-verification: “Now check the answer against the documents and highlight any assumption or missing citation.”

- Monitor retrieval quality: irrelevant docs can still lead to bad output, so retrieval + prompt engineering matter. (stackoverflow.blog)

In short: RAG + prompt engineering = stronger accuracy, better grounded outputs, fewer hallucinations.
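The retrieve-then-augment steps above can be sketched in a few lines. This is a toy sketch under loud assumptions: `retrieve` ranks documents by simple word overlap as a stand-in for a real vector search, the `<context>` tags are just one possible delimiter, and the function names, file names and document texts are all hypothetical.

```python
def retrieve(question: str, corpus: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Toy retriever: rank documents by how many words they share with the question."""
    question_words = set(question.lower().split())
    ranked = sorted(
        corpus.items(),
        key=lambda item: len(question_words & set(item[1].lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_rag_prompt(question: str, documents: list[tuple[str, str]]) -> str:
    """Put labelled context first, then the grounding rules, then the question."""
    context = "\n\n".join(
        f"[Doc{i}] (source: {name})\n{text}"
        for i, (name, text) in enumerate(documents, start=1)
    )
    return (
        f"<context>\n{context}\n</context>\n\n"
        "Only answer based on the documents inside the <context> tags. "
        "If uncertain, say 'I don't know'. Cite sources like [Doc1].\n\n"
        f"Question: {question}"
    )


# Hypothetical knowledge base used only for this example.
corpus = {
    "returns.md": "Boots can be returned within 30 days.",
    "sizing.md": "Our jerseys run small.",
    "shipping.md": "Shipping takes 5 days.",
}
question = "Can boots be returned?"
prompt = build_rag_prompt(question, retrieve(question, corpus, k=1))
print(prompt)
```

The numbered `[DocN]` labels give the model something concrete to cite, and the delimiters keep retrieved text visibly separate from the instruction. In a real system the retriever matters as much as the prompt, since irrelevant documents still lead to poor answers.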

Zero-Shot vs Few-Shot Prompting

When should I use Few-Shot Prompting over Zero-Shot Prompting?

This is a core decision for prompt designers.

Zero-Shot Prompting: You ask the model directly, no example pairs, just an instruction.

- Good when the task is straightforward and well-specified.

- Quick to set up.

- Might yield lower accuracy on complex tasks because the model has no “example” to mimic.

Few-Shot Prompting: You include several (few) example input→output pairs in the prompt before your actual task.

- Useful when: the task is non-trivial, needs a specific format, reasoning steps, or the audience expects high quality.

- Examples help the model understand how you expect the answer, not just what.

- For chain-of-thought reasoning, few-shot CoT can outperform zero-shot CoT. (promptingguide.ai)

So ask yourself:

- Is the task simple and direct? → Zero-shot may suffice.

- Does the task require logic, multiple steps, a specialised format, or domain knowledge? → Use a few-shot prompt.

- Are you optimising for highest quality vs speed? Few-shot often gives better quality but is more work.

In practice: Many prompt engineers start with zero-shot, check the output, and if performance is insufficient they move to few-shot by adding 1-5 example pairs.
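That zero-shot-first workflow can be sketched with one builder that accepts an optional list of examples. This is a minimal sketch: `build_few_shot_prompt` is a hypothetical name, and the `Input:` / `Output:` labels are one common convention rather than a required format.

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    query: str,
) -> str:
    """Build a prompt; an empty `examples` list gives a zero-shot prompt."""
    parts = [instruction, ""]
    for example_input, example_output in examples:
        parts += [f"Input: {example_input}", f"Output: {example_output}", ""]
    # The final "Output:" is left open for the model to complete.
    parts += [f"Input: {query}", "Output:"]
    return "\n".join(parts)


instruction = "Classify the sentiment of each review as positive or negative."
query = "The boots fell apart after two matches."

zero_shot = build_few_shot_prompt(instruction, [], query)
few_shot = build_few_shot_prompt(
    instruction,
    [
        ("Great jersey, fits perfectly.", "positive"),
        ("Delivery was late and the box was damaged.", "negative"),
    ],
    query,
)
print(few_shot)
```

Keeping both variants behind the same function makes it easy to start with `zero_shot`, inspect the output, and only pay the extra tokens for examples when the format or accuracy is not good enough.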

Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks (Lewis, P., et al., 2020)

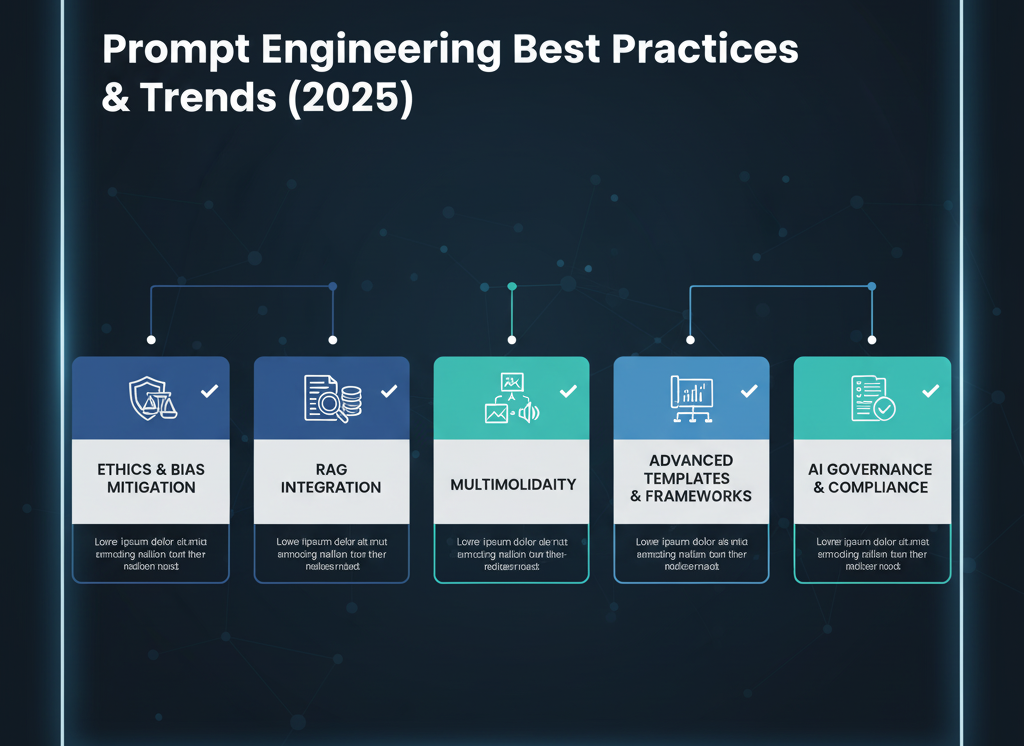

Prompt Engineering Best Practices

Here are some distilled best practices to keep your prompt engineering robust and scalable:

- Be explicit: Specify role, task, context, format. Avoid ambiguity.

- Iterate and test: Track prompt modifications, test responses, measure quality.

- Keep prompts manageable: Even with larger context windows, unnecessary verbosity can confuse the model; focus on relevant context.

- Use structured formats when needed: For content creation, JSON/YAML output, tables, etc.

- Include error/verification steps: Ask the model to self-review or to list assumptions.

- Ground the model: Especially for factual outputs, prefer RAG or include citations.

- Use template libraries: Maintain a library of well-performing prompts you can reuse.

- Watch for bias & ethics: Be aware that your prompt may introduce bias or lead to unethical responses. (See the ethics and bias item under Current Trends below.)

- Document versioning: As your prompt evolves, track versions, results, and best-performing variants.

- Stay up-to-date: As models evolve (e.g. longer context, multimodal, retrieval integration), review and refine prompt approaches.
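The “iterate and test” and “document versioning” practices can be sketched as a tiny comparison harness that scores named prompt versions against the same test cases. This is a sketch under stated assumptions: `compare_prompts` is a hypothetical helper, each template is assumed to contain an `{input}` placeholder, and `stub_llm` is a fake model that exists only so the example runs offline.

```python
from typing import Callable

LLM = Callable[[str], str]          # assumption: prompt in, text out
Check = Callable[[str], bool]       # returns True when an output is acceptable


def compare_prompts(
    llm: LLM,
    variants: dict[str, str],
    test_cases: list[tuple[str, Check]],
) -> dict[str, float]:
    """Return the pass rate (0.0 to 1.0) of each named prompt version."""
    if not test_cases:
        raise ValueError("at least one test case is required")
    scores = {}
    for name, template in variants.items():
        passed = sum(
            1 for text, check in test_cases if check(llm(template.format(input=text)))
        )
        scores[name] = passed / len(test_cases)
    return scores


def stub_llm(prompt: str) -> str:
    """Fake model: answers in JSON only when the prompt asks for JSON."""
    return '{"summary": "..."}' if "json" in prompt.lower() else "Here is a summary..."


variants = {
    "v1": "Summarise: {input}",
    "v2": "Summarise as JSON only, no explanation: {input}",
}
test_cases = [
    ("First article text", lambda output: output.startswith("{")),
    ("Second article text", lambda output: output.startswith("{")),
]
print(compare_prompts(stub_llm, variants, test_cases))
```

Storing the version names and pass rates alongside the prompt text gives you a record of which variant performed best and why it was chosen.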

Prompt Engineering Guide (IBM’s Comprehensive Guide)

Current Trends in Prompt Engineering

To stay relevant in 2025 and beyond, be aware of these industry developments:

- Growing Context Windows: Newer LLMs support context windows ranging from thousands to hundreds of thousands of tokens (some go further), enabling longer documents, deeper chains and richer context.

- Multimodality: Prompting now extends beyond text. Models may accept images, video, audio and you must engineer prompts that combine modalities.

- Prompt Templates & Libraries: Organisations are standardising prompt designs, versioning them, sharing internally and building prompt-management systems (e.g., template libraries, AIPRM).

- Self-Correction / Self-Verification: Techniques such as Chain-of-Verification (CoVe) where the model critiques its own output and refines it are gaining traction.

- RAG (Retrieval-Augmented Generation) is mainstream: More systems integrate retrieval + generation to ground responses in up-to-date factual data, reducing hallucinations.

- Agentic / Multi-Step Prompting Workflows: Instead of single prompts you now frequently see prompt chains: planning → retrieval → reasoning → output → review.

- Prompt Engineering Ethics & Bias Mitigation: As LLMs are used in more domains (marketing, HR, legal, healthcare) prompt engineers must carefully consider fairness, bias, privacy, transparency.

- Enterprise Prompt Governance: Organisations are creating prompt governance frameworks: version control, auditing prompts, monitoring output for compliance and security.

By aligning your prompt engineering practice with these trends, you position yourself at the cutting edge and ensure your solutions scale and remain future-proof.

NeurIPS 2024 Conference

Conclusion

Prompt engineering is no longer a novelty; it is a foundational skill in the age of AI. From the basics of LLM prompting to advanced techniques like chain-of-thought and retrieval-augmented generation (RAG), the ability to craft high-quality prompts is something that content creators, marketers, analysts and developers must master. As an aspiring prompt engineer (or someone integrating AI into workflows), you do not necessarily need to be a hardcore programmer, but you do need strong domain knowledge, logical thinking, and a willingness to iterate and refine.

The field is evolving fast: longer context windows, multimodal capability, prompt-template libraries, self-verification and retrieval systems are shaping how we design prompts today. If you invest in building prompt-engineering skills now, you will be well-positioned in your career and capable of unlocking the full potential of LLMs for business, content, analytics and automation.

By applying the role-task-context-format checklist, using few-shot where appropriate, leveraging structured output and retrieval workflows, and following best practices (including ethical guardrails), you can craft prompts that deliver reliable, high-value outputs. Embrace prompt engineering not just as “typing instructions into a model” but as designing a system, a workflow and a bridge between human intent and machine logic. You will gain authority, consistency and trust in your AI-powered workflows, and your readers, stakeholders or clients will see you as a knowledgeable expert in this space.

Also, check out AI Shala’s pick for the top 10 free AI tools in 2025.

Frequently Asked Questions

What are the key components of an effective prompt?

Role: defines who/what the model is (e.g., expert, assistant)

Task: defines what it needs to do (e.g., summarise, list, analyse)

Context: the background information needed (e.g., brand details, audience, domain)

Format: how the output should appear (e.g., “Provide JSON”, “Use headings and bullet points”)

When all these are explicit, your prompt is clearer and more likely to yield the desired output.